The second day of the Data and City Workshop (here are the notes from day 1) started with the session Data Models and the City.

Pouria Amirian started with Service Oriented Design and Polyglot Binding for Efficient Sharing and Analysing of Data in Cities. The starting point is that the management of the city needs data, and therefore technologies to handle data are necessary. In the traditional pipeline, we start from sources, then use tools to move the data to a data warehouse, and then do the analytics. The problems with the traditional approach are the size of the data (the management of the data warehouse is very difficult), the need to deal with real-time data that must be answered very fast, and finally new data types: data from sensors, social media and cloud-born data that is created outside the organisation. Therefore, it is imperative to stop moving data around and instead analyse it where it is. Big Data technologies aim to resolve these issues – e.g. from the development of the Google distributed file system that led to Hadoop to similar technologies. Big Data relates to the technologies that are being used to manage and analyse it. The stack for managing big data now includes over 40 projects that support different aspects of governance, data management, analysis etc. Data Science includes many areas: statistics, machine learning, visualisation and so on – and no one expert can know all these areas (such experts exist as much as unicorns exist). Interaction between data science researchers and domain experts is necessary for ensuring reasonable analysis. In the city context, these technologies can be used for different purposes – for example deciding on the allocation of bikes in the city using real-time information that includes social media (Barcelona). We can think of data scientists as active actors, but there are also opportunities for citizen data scientists who use tools and technologies to perform the analysis. Citizen data scientists need data and tools – such as a visual analysis language (AzureML) that allows them to create models graphically and set a process in motion.
Finding and accessing the data must be made easy, so interoperability is important. Service oriented architecture (which uses web services) is an enabling technology for this, and the current Open Geospatial Consortium (OGC) standards require some further development and changes to make them relevant to this environment. Different services can be provided to different users with different needs [comment: but that increases maintenance and complexity]. No single stack provides for all the needs.

Next Mike Batty talked about Data about Cities: Redefining Big, Recasting Small (his paper is available here) – exploring how Big Data was always there: locations can be seen as bundles of interactions – flows in systems. However, visualisation of flows is very difficult, which makes it challenging to understand the results and to check them. The core issue is that among N locations there are N^2 interactions, and this quadratic growth as N increases is a continuing challenge in understanding and managing cities. In 1964, Brian Berry suggested a system of location, attributes and time – but the temporal dimension was suppressed for a long time. With Big Data, the temporal dimension is becoming very important. The difficulty of understanding data is demonstrated by travel flows – the more regions are included, the bigger the interaction matrix, and it is then difficult to show and make sense of all these interactions. Even trying to create scatter plots is complex and does not help to reveal much.
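To make the scale of the interaction problem concrete, here is a minimal sketch (not from the talk) of an origin-destination flow matrix. The zone labels and the flow value are hypothetical, and self-flows are counted so that the total matches the N^2 figure above. The point it illustrates is that doubling the number of zones quadruples the number of cells that have to be stored, visualised and checked.

```python
from itertools import product


def interaction_count(n_locations: int) -> int:
    """Number of origin-destination pairs among N locations, self-flows included."""
    return n_locations ** 2


def empty_flow_matrix(zones):
    """Build an origin-destination matrix as a dict keyed by (origin, destination)."""
    return {(origin, dest): 0 for origin, dest in product(zones, repeat=2)}


# Hypothetical zones and a single illustrative flow value.
zones = ["A", "B", "C"]
matrix = empty_flow_matrix(zones)
matrix[("A", "B")] += 120

# The number of cells grows with the square of the number of zones.
for n in (10, 100, 1000):
    print(n, interaction_count(n))
```

Even at a thousand zones, which is modest for a metropolitan travel model, the matrix already has a million cells, which is why plotting every flow at once reveals so little.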

The final talk was from Jo Walsh, titled Putting Out Data Fires; life with the OpenStreetMap Data Working Group (DWG). Jo noted that she was talking from the position of a volunteer in OSM, and recalled that 10 years ago she gave a talk about technological determinism, with a not completely utopian picture of cities, in which OpenStreetMap (OSM) was considered as part of the picture. Now, in order to review the current state of OSM activities relevant for her talk, she asked on the OSM mailing list for examples. She also highlighted that OSM is big, but it's not Big Data – it can still fit in one Postgres installation. There is no anonymity in the system – you can find out quite a lot about people from their activity, and that is built into the system. There are all sorts of projects that demonstrate how OSM data is relevant to cities – such as OSM Buildings, which creates 3D buildings from the database, or the use of OSM with 3D modelling data such as a DTM. OSM provides support for editing in the browser or with an offline editor (JOSM). Importantly, OSM is not only a map but also a database (like the new OSi database) – as can be shown by running searches on the database from a web interface. There are unexpected projects, such as custom clothing from maps, or Dressmap. More serious surprises are projects like the Humanitarian OSM Team and the Missing Maps project – there are issues with the quality of the data, but also with the fact that mapping is imposed from the outside on an area that is not mapped, and there are some elements of colonial thinking in it (see Gwilym Eddes' critique). The InaSAFE project is an example of disaster modelling with OSM. In Poland, they extend the model to mark details of road areas and other details. All these demonstrate that OSM is getting close to the next level of using geographic information, and there are current experiments with it. Projects such as UTC of Mappa Marcia are linking OSM to transport simulations.
Another activity is the use of historical maps – townland.ie.

One of the roles that Jo plays in OSM is as part of the Data Working Group, which she joined following a discussion within the community about diversity in OSM. The DWG needs some help, and its role is geodata thought police/janitorial judicial service/social work arm of the volunteer fire force. The DWG cleans up messy imports and deals with vandalism, but also deals with dispute resolution. It is similar to a volunteer fire service: when something happens, you can see the sysadmins sparking into action to deal with an emerging issue. For example, someone from Uzbekistan says that they found corruption in some new information, so you need to find the changeset, and ask people to annotate more and say what they are changing and why. OSM is self-policing and self-regulating – but different people have different ideas about what they are doing. For example, different groups have their own view of what they want to do. There are also clashes between armchair mappers and surveying mappers – a dispute between someone who is editing remotely and a local person who says that they know the road and asks for the classification edit to be changed. The DWG doesn't have a legal basis, and some issues come up because of the global scope – for example, translated names that do not reflect local practices. There are tensions between commercial actors that work on OSM and ordinary volunteer mappers. OSM doesn't have privileges over other users – so the DWG is recognised by the community and gathers authority through consensus.

The discussion that followed this session explored examples from OSM: there are conflicted areas such as Crimea and other contested territories. Pouria explained that in the current models of distributed computing there are data nodes that keep the data static, and the code is transferred instead of the data. There is a growing bottleneck in network latency due to the amount of data. There is a hierarchy of packaging systems that you need to use in order to work with a distributed web system, so tightening up code is an issue.
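The idea of sending code to the data, rather than data to the code, can be sketched in a few lines. This is an illustrative toy under stated assumptions, not a description of any particular framework: `DataNode`, `partial_sum` and `global_mean` are hypothetical names, the "nodes" are plain objects in one process, and the partitions are assumed to be non-empty.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class DataNode:
    """A hypothetical data node that holds its partition and never ships it out."""
    name: str
    records: List[float]

    def run(self, task: Callable[[List[float]], Dict[str, float]]) -> Dict[str, float]:
        # The task (code) travels to the node; only a small summary travels back.
        return task(self.records)


def partial_sum(records: List[float]) -> Dict[str, float]:
    """Task executed next to the data: reduce a partition to two numbers."""
    return {"total": sum(records), "count": len(records)}


def global_mean(nodes: List[DataNode]) -> float:
    """Combine the per-node summaries into a global mean."""
    partials = [node.run(partial_sum) for node in nodes]
    total = sum(p["total"] for p in partials)
    count = sum(p["count"] for p in partials)
    return total / count


# Hypothetical partitions held on two nodes.
nodes = [DataNode("north", [4.0, 6.0]), DataNode("south", [10.0])]
print(global_mean(nodes))
```

However large each partition is, only two numbers per node cross the network, which is the reason for keeping the data static and moving the computation.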

Rob – there are limits to Big Data, such as hardware and software, as well as the analytics of the information. There are also limits to how far you can foster community when the size is very large and the organisation is managed by volunteers. Mike – the quality of big data is rather different in terms of its problems from traditional data, so while things are automated, making sense of it is difficult – e.g. tap in but without tap out in the Oyster data. The bigger the dataset, the bigger the issues with it might be. The knowledge that we get is heterogeneous in time and transfers the focus to the routine. But evidence is important to policy making and making cases. Martijn – how to move the technical systems to allow the move to focal community practice? Mike – the transport modelling is based on the funders promoting digital technology use, and it can be done for a specific place, and the question is who are the users? There is no clear view of who they are and there is wide variety, with different users playing different roles – 'policy analysts' are the first users of models – they are domain experts who advise policy people. There is less thinking about informed citizens. How people react to big infrastructure projects – the articulation of the policy is different from what is coming out of the models. There are projects that got open and closed mandates. Jo – OSM has a tradition of mapping parties that bring people together, but that needs a critical mass to be already there – so the question is how to bootstrap this process, such as how to support a single mapper in Houston, Texas. There are cases of companies using the data while local people used historical information, which created conflict in the way that people use them. There are cases in which the tension goes very high, but it does need negotiation. Rob – issues about data citizens and digital citizenship concepts.
Jo – in terms of community governance, the OSM Foundation is very hands off, and there isn't a detailed process for dealing with corporate employees who are mapping as part of their job. Evelyn – the conventions are matters of dispute and negotiation between participants. The conventions are being challenged all the time. One of the challenges of dealing with citizenship is to challenge the boundaries and protocols that go beyond the state. Retain the term to separate it from the subject.

The last session in the workshop focused on Data Issues: surveillance and crime.

David Wood talked about Smart City, Surveillance City: human flourishing in a data-driven urban world. The talk considers smart cities as an archetype of the surveillance society. Because the smart city is part of the surveillance society, one way to deal with it is to consider resistance and abolition to allow human flourishing. His interest is in rights – beyond privacy. What is it that we really want for human beings in this data-driven environment? We want all to flourish, and that means starting from the most marginalised, at the bottom of the social order. The idea of flourishing comes from Spinoza and also from Luciano Floridi – his anti-entropic information principle. Starting with the smart cities – business and government are dependent on large quantities of data, and this increases surveillance. Social science ignores that these technologies provide the ground for social life. The smart city concept includes multiple visions: for example, a European vision that is about government first – how to make good government in cities, with technology as part of a wider whole. The US approach asks how we can use information management for complex urban systems. This relies on other technologies – pervasive computing, IoT and things that are woven into the fabric of life. The third vision is the Smart Security vision – technology used in order to control urban terrain, with military techniques used in cities (as they are in war zones), for example biometric systems for refugees in Afghanistan, which serve both control and the provision of services. The history goes back to cybernetics and policing initiatives from the colonial era. The visions overlap – security is not overtly part of them (apart from military actors). Smart Cities are inevitably surveillance cities – a collection of data for purposeful control of the population.
Specific concerns of researchers are the targeting of people who fit a certain kind of profile, and the aggregation of private data for profit at the expense of those who are involved. The critique of surveillance is about the issue of sorting, unfair treatment of people etc. Beyond that – as discussed in the special issue on surveillance and empowerment – there are positive potentials. Many of these systems have a role for the common good. We need to think about the city within neoliberal capitalism, which separates people in space along specific lines and areas, from borders to buildings. It is trying to make the city into a tamed zone – but the dangerous parts of city life are also a source of opportunities and creativity. The smart city fits well with this aspect – stopping the city from being disorderly. A paper from 1995 critiques pervasive computing as surveillance: the more it reduces the distance between us and things, the more the world becomes a surveillance device and stops us from acting on it politically. In many of the visions of pervasive computing, the human is actually marginalised. This is still the case. There are opportunities for social empowerment, say to allow the elderly to move into areas that they stopped exploring, or to use it to overcome disability. Participation, however, is flawed – who can participate in what, where and how? Additional questions are that highly technical participation is limited to a very small group, and that participation can also become instrumental – 'sensors on legs'. The smart city could enable us to discover the beach under the pavement (a concept from the Situationists) – and some are being hardened. The problem is corporate 'walled garden' systems, and we need to remember that we might need to bring them down.

Next Francisco Klauser talked about Michel Foucault and the smart city: power dynamics inherent in contemporary governing through code. He is interested in the power dynamics of governing through data. He takes from Foucault the concept of understanding how we can explain power put into action. He is also thinking about different modes of power: Referentiality – how does security relate to governing? Normativity – what is the norm and where did it come from? Spatiality – how are discipline and security spread across space? Discipline is how to impose a model of behaviour on others (panopticon). Security works in another way – it frees things up within limits. So the two modes work together. Power starts from the study of a given reality. Data is about the management of flows. The specific relevance to data in cities is shown by looking at refrigerated warehouses that are used within the framework of the smart grid to balance energy consumption – storing and releasing the energy that is preserved in them. The whole warehouse has been objectified and quantified – down to the specific product and the opening and closing of doors. He sees the core of the control in connections, processes and flows. Think of liquid surveillance – beyond the human.

Finally, Teresa Scassa explored Crime Data and Analytics: Accounting for Crime in the City. Crime data is used in planning, allocation of resources and public policy making – a broad range of uses. It is part of oppositional social justice narratives, and it is an artefact of the interaction of citizen and state, as understood and recorded by the agents of the state operating within particular institutional cultures. She looked at crime statistics that are provided to the public as open data – derived from police files under some guidelines – and also at emergency call data, which is made from calls to the police, to provide crime maps. The data that is used in visualisations about the city is not the same data that is used for official crime statistics. There are limits to the data – institutional factors: it measures the performance of the police, not crime. It's how the police are doing their job – and there are lots of acts of 'massaging' the data by those that are observed. The stats are manipulated to produce the results that are requested. The police are the sensors, and there is under-reporting of crime according to the opinion of the police officer – e.g. sexual assault – and also because of the privatisation of policing, with private actors who don't report. Crime maps are offered by private sector companies that sell analytics and then provide a public-facing option – the narrative is controlled – what will be shared and how. Crime maps are declared as 'public awareness or civic engagement' but not as transparency or accountability. The focus is on property offences and not on white collar ones. There are 'alternalytics' – using other sources, such as victimisation surveys, legislation, data from hospitals, sexual assault crisis centres, and crowdsourcing. An example of bottom-up reporting is HarassMap, which started in Egypt, for reporting cases. Legal questions are how the relationship between private and public sector data affects ownership, access and control. Another one is how the state structure affects data comparability and interoperability.
There is also a question about how law prescribes and limits what data points can be collected or reported.

The session closed with a discussion that explored some examples of solutionism, like crowdsourcing that asks the most vulnerable people in society to contribute data about assaults against them, which is highly problematic. Crime data is popular in portals such as the London one, but it is mixed up with multiple concerns such as property prices. David – the utopian concept of platform independence, and the assumption that platforms are without values, are inherently wrong.

The workshop closed with a discussion of the main ideas and lessons that emerged from it. How are all these things playing out? Some of the questions that started emerging are about how crowdsourcing can be bottom-up (OSM) and sometimes top-down, with issues about data cultures in Citizen Science, for example. There are questions about the degree to which the political aspects of citizenship and subjectivity are playing out in citizen science. Re-engineering information in new ways and the rural/urban divide are issues that bodies such as Ordnance Survey need to face; the conflicts within data are an interesting piece, as is the need to ensure that the data is useful. The 'sensors on legs' concept can be relevant to bodies such as Ordnance Survey. The concept of the stack is also relevant to where we position our research and what different researchers do: starting from the technical aspects to how people engage, and the workshop gave a slice through these layers. An issue that was left outside is the business aspect – who will use it, and how it is paid for. We need public libraries with the information, but also the skills to do things with these data. The data economy is important and some data will only be produced by the state, but there are issues with the data practices within the data agencies of the state – and the data is not ready to get out. If data is garbage, you can't do much with it – there is no economy that can be based on it. Open questions are: when does data produce software? When does it fail? Can we produce data with and without connection to software? There is also the physical presence and the environmental impacts. Citizen engagement about infrastructure is lacking, and we need to tease out how things can be opened up for people to get involved. There was also a need to be nuanced about the city in the same way that we focused on data.
Try to think about the ways the city is framed: as a site of activities, subjectivity, practices; city as a source of data – mined; city as political jurisdiction; city as aspiration – the city of tomorrow; city as concentration of flows; city as a social-cultural system; city as a scale for analysis/laboratory. The title is Data and the City – is it for a city? Back to environmental issues – data is not ephemeral and does have tangible impacts (e.g. energy use in blockchain, inefficient algorithms, electronic waste (WEEE) that is left in the city). There are also issues of access and control – huge volumes of data. These issues are covered in papers such as device democracy. There are wider issues that link technology to wider systems of thought and considerations.

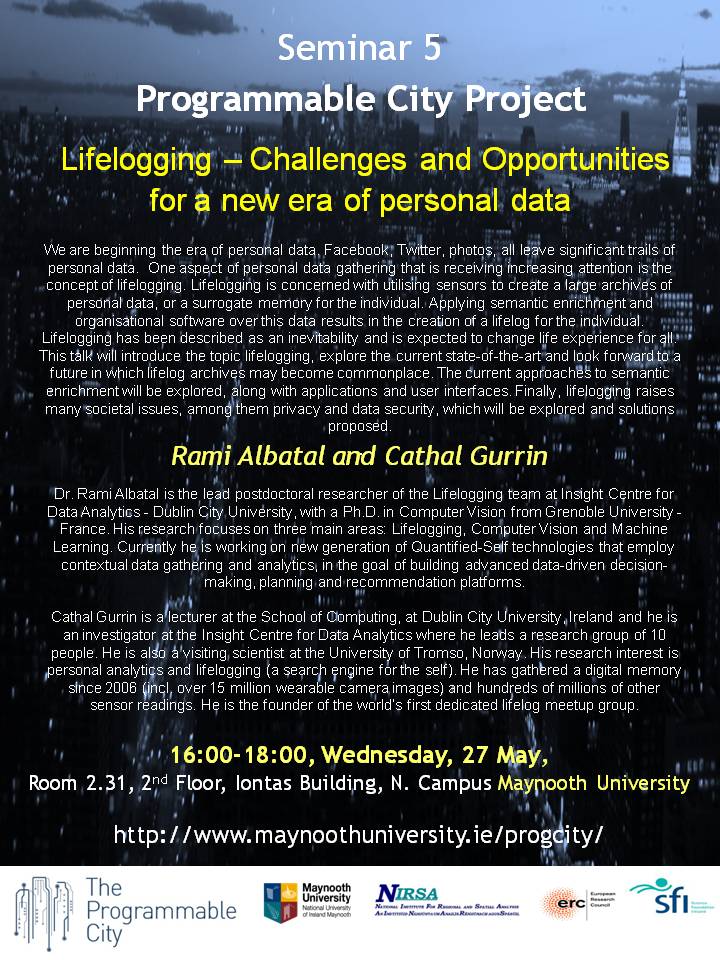

The workshop, which is part of the Programmable City project
