Predictive analytics is a way of responding to, and taking advantage of, large historical and emerging datasets by using “a variety of statistical, modeling, data mining, and machine learning techniques … to make predictions about the future”. One of the advantages of drawing predictions from big data, according to the vision of social physics, is that

You can write down equations that predict what people will do. That’s the huge change. So I have been running the big data conversation … It’s about the fact that you can now understand customers, employees, how we organise, in a quantitative, predictive way for the first time.

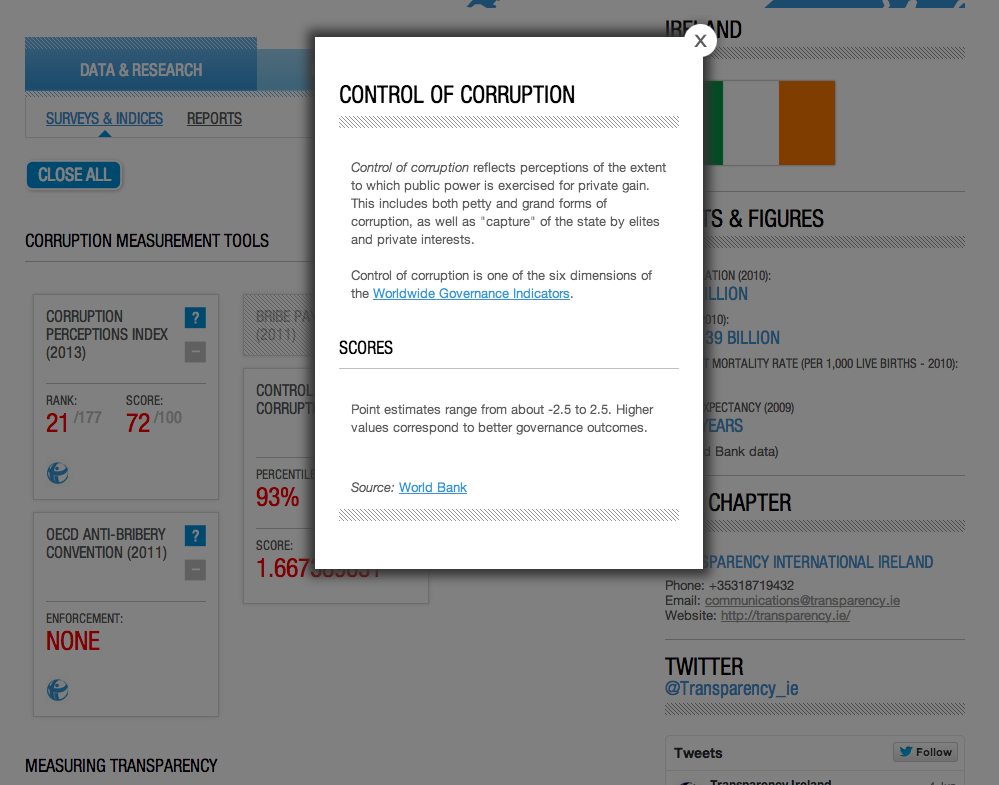

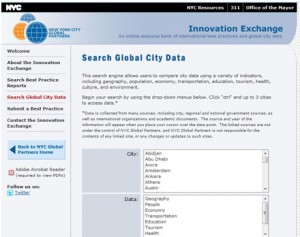

Predictive analytics is fervently discussed in the business world, if not fully taken up, and increasingly so by public services, governments and medical practices seeking to exploit the value hidden in the public archive or even in social media. In New York, for example, there is a geek squad in the Mayor’s office, seeking to uncover deep and detailed relationships between the people living there and the government, while at the same time realising “how insanely complicated this city is”. Here, intriguing questions remain as to the effectiveness of predictive analytics, the extent to which it can support and facilitate urban life, and the consequences for cities that are immersed in a deep sea of data, predictions and humans.

Let’s start with an Australian example. The Commonwealth Scientific and Industrial Research Organisation (CSIRO) has partnered with Queensland Health, Griffith University and Queensland University of Technology to develop the Patient Admission Prediction Tool (PAPT), which estimates the presentations of schoolies (Australian high-school leavers going on week-long holidays after their final examinations) to nearby hospitals. The PAPT derives its estimates from Queensland Health data on schoolies presentations in previous years, including statistics about the number of presentations, the parts of the body injured and the types of injury. Using these data, the PAPT benefits hospitals, their employees and patients through improved scheduling of hospital beds, procedures and staff, with the potential to save $23 million per annum if implemented in hospitals across Australia. As characterised by Dr James Lind, one of the benefits of adopting predictive analytics is a proactive rather than reactive approach to planning and management:

People like working in a system that is proactive rather than reactive. When we are expecting a patient load everyone knows what their jobs [are], and you are more efficient with your time.

The patients are happy too, because they receive and finish treatment quickly.

Can we find such success when predictive analytics is practised in various forms of urban governance? Let’s move the discussion back to US cities and use policing as an example. Policing work is shifting from reactive to proactive in many cities, whether at an experimental or an implementation stage. PredPol is predictive policing software produced by a startup company, and it has attracted a considerable amount of attention from police departments in the US and other parts of the world. Its success as a business, however, is partly due to the company’s “energetic” marketing strategies, contractual obligations to refer the startup to other law enforcement agencies, and so on.

Above all, the claims of success made by the company are difficult to sustain under closer examination. The subjects of the analytics that the software focuses on are very specific: burglaries, robberies, vehicle thefts, thefts from vehicles and gun crimes. In other words, these are crimes that have “plenty of data to chew on” for making predictions, and they are opportunistic crimes that are easier to prevent through the presence of patrolling police (more details here).

This further brings us to the issue of the “proven” and “continued” aspects of success. These are even more difficult and problematic aspects of policing work when it comes to evaluating the “effectiveness” and “success” of predictive policing. To prove that an algorithm performs well, the expectations for which the algorithm is built and tweaked have to be specified, not only for those who build the algorithm, but also for the people who will be entangled in the experiments in intended and unintended ways. In this sense, transparency and objectivity in predictive policing are important. Without acknowledging, considering and evaluating how both crimes and everyday life, or both normality and abnormality, are transformed into algorithms, and without disclosing them for validation and consultation, a system of computational criminal justice can turn into, if not witch-hunting, then alchemy: let’s put two or more elements into a magical pot, stir them and see what happens! This is further complicated by the fact that there are already existing and inherent inequalities in crime data, such as in reporting or sentencing, and the perceived neutrality of algorithms can all too easily legitimise cognitive biases that are well documented in the justice system, biases that could justify the rationale that someone should be treated more harshly because the person is already on a blacklist, without reconsidering how the person got onto such a list in the first place. There is an even darker side to predictive policing when mundane social activities are constantly treated as crime data, with social network analysis used to profile and incriminate groups and groupings of individuals.

This is also a more dynamic and contested field of play, considering that while crime prediction practitioners (coders, private companies, government agencies and so on) appropriate personal data and private social media messages for purposes they were not intended for, criminals (or activists for that matter) play with social media, if not yet with prediction results obtained by reverse engineering the algorithms, to plan coups, protests, attacks, etc.

For those who want to look further into how predictive policing is set up, proven, run and evaluated, there are ways of opening up the black box, at least partially, to reflect critically upon what exactly it can achieve and how “success” is managed both in computer simulation and in police practice. The chief scientist of PredPol gave a lecture in which, as has been pointed out:

He discusses the mathematics/statistics behind the algorithm and, at one point, invites the audience not to take his word for it’s accuracy because he is employed by PredPol, but to take the equations discussed and plug in crime data (e.g. Chicago’s open source crime data) to see if the model has any accuracy.

The video of the lecture is here.

Furthermore, RAND provides a review of predictive policing methods and practices across many US cities. The report can be found here and analyses the advantages gained by various crime prediction methods as well as their limitations. Predictive policing as shown in the report is far from a crystal ball, and involves various levels of complexity to run and implement, mathematically, computationally and organisationally. Predictions can range from crime mapping to predicting crime hotspots given certain spatiotemporal characteristics of crimes (see the taxonomy on p. 19). As far as predictions are concerned, they are good as long as crimes in the future look similar to the ones in the past in their types, temporality and geographic prevalence, and as long as the data is good, which is a big if! Predictions are also better when they are further contextualised. Compared with predicting crimes without any help (not even from the intelligence that agents in the field can gather), applying mathematics to the guessing game creates a significant advantage, but the differences among the mathematical methods themselves are not as dramatic. Therefore, one of the crucial messages that comes from reviewing and contextualising predictive methods is that:

It is important to bear in mind that the predictive methods discussed here do not predict where and when the next crime will be committed. Rather, they predict the relative level of risk that a crime will be associated with a particular time and place. The assumption is always that the past is prologue; predictions are made based on the analysis of past data. If the criminal adapts quickly to police interventions, then only data from the recent past will be useful to police departments. (p. 55)
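The distinction in the quote above, between predicting the next crime and ranking places and times by relative risk, can be made concrete with a small sketch. This is an illustration only and is not the method used by PredPol or by any tool reviewed in the RAND report. The function name `relative_risk`, the grid cell labels, the time slots and the `half_life_days` parameter are all assumptions made for the example, and the incident records are invented.

```python
from collections import defaultdict


def relative_risk(incidents, now, half_life_days=None):
    """Share of (optionally recency-weighted) past incidents per (cell, slot).

    incidents: iterable of (cell, time_slot, day) tuples, where `day` is an
               integer day index (hypothetical record format).
    now: the current day index; records dated after `now` are ignored.
    half_life_days: if given, an incident's weight halves every
               `half_life_days` days, so older records count for less.
    """
    weights = defaultdict(float)
    for cell, slot, day in incidents:
        age = now - day
        if age < 0:
            continue  # skip records from the "future"
        w = 1.0 if half_life_days is None else 0.5 ** (age / half_life_days)
        weights[(cell, slot)] += w
    total = sum(weights.values())
    if total == 0:
        return {}
    # Normalise so the values are relative shares that sum to 1.
    return {key: w / total for key, w in weights.items()}


# Invented records: three old incidents in cell A, two recent ones in cell B.
incidents = [
    ("A", "night", 1), ("A", "night", 2), ("A", "night", 3),
    ("B", "day", 29), ("B", "day", 30),
]

all_history = relative_risk(incidents, now=30)
recent_only = relative_risk(incidents, now=30, half_life_days=7)
```

With equal weights, cell A at night carries the larger share of risk simply because it has more records. Once older records are discounted, cell B during the day ranks higher. Neither output says where the next crime will happen; both only rank places and times using past data, and the choice of how far back to look is a human decision.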

Therefore, however automated the methods are, human and organisational efforts are still required in many areas of practice. Activities such as finding relevant data, preparing them for analysis, and tweaking factors, variables and parameters all require human effort and teamwork, as does transforming decisions into crime-reducing actions at the organisational level. Similarly, human and organisational efforts are again needed when types and patterns of crime change, when targeted crimes shift, and when results have to be interpreted and integrated in relation to the changing availability of resources.

Furthermore, the report reviews the issues of privacy, transparency, trust and civil liberties within existing legal and policy frameworks. However, it becomes apparent that predictions and predictive analytics need careful and mindful design, responding to emerging ethical, legal and social issues (ELSI), because the impacts of predictive policing occur at individual and organisational levels, affecting the day-to-day life of residents, communities and frontline officers. While it is important to maintain and revisit existing legal requirements and frameworks, it is also important to respond to emerging information and data practices, and notions such as “obscurity by design” and “procedural data due process” are ways of rethinking and designing relationships between privacy, data, algorithms and predictions. Even the term transparency needs further reflection: what does it mean in the context of predictive analytics, and how can it be achieved, taking into account renewed theoretical, ethical, practical and legal considerations? In this context, “transparent predictions” have been proposed, with an outline of the importance, and the potential unintended consequences, of rendering prediction processes interpretable to humans and driven by causation rather than correlation. Critical reflections on such a proposal are useful, for example this two part series (1)(2), which further contextualises transparency both in prediction processes and in case-specific situations.

Additionally, IBM has partnered with the New York Fire Department and the Lisbon Fire Brigade. The main goal is to use predictive analytics to make smart cities safer by allocating emergency response resources more effectively. Similarly, crowd behaviours have already been simulated to understand and predict how people would react in various crowd events in places such as major traffic and travel terminals, sports and concert venues, shopping malls and streets, busy traffic areas, etc. Simulation tools take into account data generated by sensors, as well as quantified embodied actions and attributes, such as walking speeds, body sizes or disabilities, and it is not difficult to imagine that further analysis could draw on social media data, where sentiments and psychological aspects are expected to refine simulation results (a review of simulation tools).

To bring the discussion to a pause: data, algorithms and predictions are quickly turning not only cities but also many other places into testbeds, as long as there are sensors (human and nonhuman) and the Internet. Data will become available, and different kinds of tests can then be run to verify ideas and hypotheses. As many articles have pointed out, data and algorithms are flawed, revealing and reinforcing unequal parts and aspects of cities and city lives. Tests and experiments, such as Facebook’s manipulation of user emotions, can make cities vulnerable too when they are run without regard to embodied and emotionally charged humans. Therefore, there is a great deal more to say about data, algorithms and experiments, because the production of data and the experiments that make use of them are always an intervention rather than merely an evaluation. We will be coming back to these topics in subsequent posts.