Rob provides an overview of The Programmable City project in this launch video, which includes the ideas underpinning the research and the prospective case studies. Here are links to the slides and the complete program.

Programmable City Launch Presentations

Below are the Programmable City Project Launch Presentations. The presentations were much more dynamic when delivered, as some are animated while others include videos, but alas, sharing makes them a little static. The videos will soon be available, and watching them will provide you with more of a sense of what the talks were about. Here is the full program.

Introduction: The Programmable City Project

- Rob Kitchin, PI Programmable City Project, NIRSA, NUIM

Session 1: Software and cities

- Matt Wilson (Harvard), Quantified Self-City-Nation

- Martin Dodge (Department of Geography, University of Manchester), Code and Conveniences

Session 2: Data and cities

- Tim Reardon (Assistant Director of Data Services, MAPC, Boston), Putting Data to Work in Metro Boston

- Tracey P. Lauriault (Programmable City team), A genealogy of open data assemblages

Session 3: Smart Cities

- Siobhan Clarke (Trinity College Dublin), ICT-Enabled Behavioural Change in Smart Cities

- Adam Greenfield (London School of Economics), Another city is possible: Networked urbanism from above and below? Visit Adam’s Website UrbanScale

Session 4: Programmable City PhD/Postdoc projects & Gavin McArdle – Dublin City Dashboard Demo

- Robert Bradshaw, Smart Bikeshare

- Dr Sophia Maalsen, How are discourses and practices of city governance translated into code?

- Jim Merricks White, Towards a Digital Urban Commons: Developing a situated computing praxis for a more direct democracy

- Alan Moore, The Role of Dublin in the Global Innovation Network of Cloud Computing

- Dr Leighton Evans, How does software alter the forms and nature of work?

- Darach Mac Donncha, ‘How software is discursively produced and legitimised by vested interests’

- Dr Sung-Yueh Perng, Programming Urban Lives

- Dr Gavin McArdle, NCG, NIRSA, NUIM, Dublin Dashboard Performance Indicators & Metrics

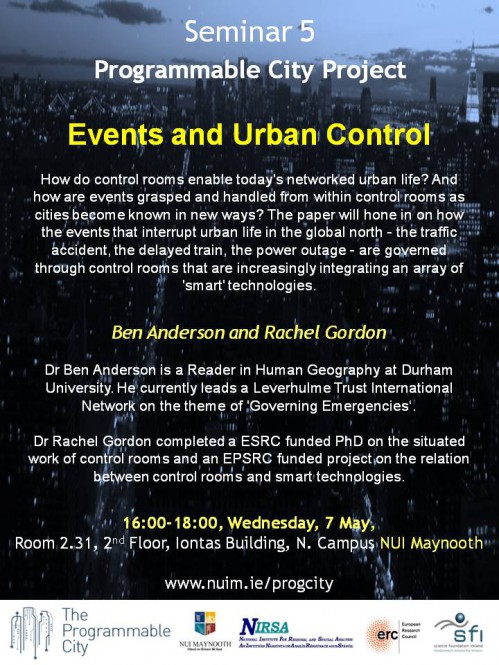

Seminar: Events and Urban Control by Ben Anderson and Rachel Gordon, 7 May

The fifth Programmable City seminar will take place on May 7th. Based on some detailed ethnographic work, the paper will focus on the workings of control rooms in governing events.

Events and Urban Control

Ben Anderson and Rachel Gordon

Time: 16:00 – 18:00, Wednesday, 7 May, 2014

Venue: Room 2.31, 2nd Floor Iontas Building, North Campus NUI Maynooth (Map)

Abstract

How do control rooms enable today’s networked urban life? And how are events grasped and handled from within control rooms as cities become known in new ways? The paper will home in on how the events that interrupt urban life in the global north – the traffic accident, the delayed train, the power outage – are governed through control rooms; control rooms that are increasingly integrating an array of ‘smart’ technologies. Control rooms are sites for detecting and diagnosing events, where action to manage events is initiated in the midst of multiple forms of ambiguity and uncertainty. By focusing on the work of control rooms, the paper will ask what counts as an event of interruption or disruption and trace how forms of control are enacted.

About the speakers

Dr Ben Anderson is a Reader in Human Geography at Durham University. Recently, he has become fascinated by how emergencies are governed and how emergencies govern. He currently leads a Leverhulme Trust International Network on the theme of ‘Governing Emergencies’, and is conducting a genealogy of the government of and by emergency supported by a 2012 Philip Leverhulme Prize. Previous research has explored the implications of theories of affect and emotion for contemporary human geography. This work will be published in a monograph in 2014: Encountering Affect: Capacities, Apparatuses, Conditions (Ashgate, Aldershot). He is also co-editor (with Dr Paul Harrison) of Taking-Place: Non-Representational Theories and Geography (2010, Ashgate, Aldershot).

Dr Rachel Gordon completed an ESRC-funded PhD on the situated work of control rooms, with particular reference to transport systems and to how control rooms deal with complex urban systems. She currently coordinates a Leverhulme Trust international network on Governing Emergencies, after completing an EPSRC-funded project on the relation between control rooms and smart technologies.

Writing for impact: how to craft papers that will be cited

For the past few years I’ve co-taught a professional development course for doctoral students on completing a thesis, getting a job, and publishing. The course draws liberally on a book I co-wrote with the late Duncan Fuller entitled The Academics’ Guide to Publishing. One thing we did not really cover in the book was how to write and place pieces that have impact; instead we provided more general advice about getting through the peer review process.

The general careers advice mantra of academia is now ‘publish or perish’. Often what is published and its utility and value can be somewhat overlooked — if a piece got published it is assumed it must have some inherent value. And yet a common observation is that most journal articles seem to be barely read, let alone cited.

Both authors and editors want to publish material that is both read and cited, so what is required to produce work that editors are delighted to accept and readers find so useful that they want to cite it in their own work?

A taxonomy of publishing impact

The way I try and explain impact to early career scholars is through a discussion of writing and publishing a paper on airport security (see Figure 1). Written pieces of work, I argue, generally fall into one of four categories, with the impact of the piece rising as one traverses from Level 1 to Level 4.

Level 1: the piece is basically empiricist in nature and makes little use of theory. For example, I could write an article that provides a very detailed description of security in an airport and how it works in practice. This might be interesting, but would add little to established knowledge about how airport security works or how to make sense of it. Generally, such papers appear in trade magazines or national level journals and are rarely cited.

Level 2: the paper uses established theory to make sense of a phenomenon. For example, I could use Foucault’s theories of disciplining, surveillance and biopolitics to explain how airport security works to create docile bodies that passively submit to enhanced screening measures. Here, I am applying a theoretical frame that might provide a fresh perspective on a phenomenon if it has not been previously applied. I am not, however, providing new theoretical or methodological tools but drawing on established ones. As a consequence, the piece has limited utility, essentially constrained to those interested in airport security, and might be accepted in a low-ranking international journal.

Level 3: the paper extends/reworks established theory to make sense of phenomena. For example, I might argue that since the 1970s when Foucault was formulating his ideas there has been a radical shift in the technologies of surveillance from disciplining systems to capture systems that actively reshape behaviour. As such, Foucault’s ideas of governance need to be reworked or extended to adequately account for new algorithmic forms of regulating passengers and workers. My article could provide such a reworking, building on Foucault’s initial ideas to provide new theoretical tools that others can apply to their own case material. Such a piece will get accepted into high-ranking international journals due to its wider utility.

Level 4: the paper uses the study of a phenomenon to rethink a meta-concept or proposes a radically reworked or new theory. Here, the focus of attention shifts from how best to make sense of airport security to the meta-concept of governance, using the empirical case material to argue that it is not simply enough to extend Foucault’s thinking; rather, a new way of thinking is required to adequately conceptualize how governance is being operationalised. Such new thinking tends to be well cited because it can generally be applied to making sense of lots of phenomena, such as the governance of schools, hospitals, workplaces, etc. Of course, Foucault consistently operated at this level, which is why he is so often reworked at Levels 2 and 3, and is one of the most impactful academics of his generation (cited nearly 42,000 times in 2013 alone). Writing a Level 4 piece requires a huge amount of skill, knowledge and insight, which is why so few academics work and publish at this level. Such pieces will be accepted into the very top ranked journals.

One way to think about this taxonomy is this: generally, those people who are the biggest names in their discipline, or across disciplines, have a solid body of published Level 3 and Level 4 material. This is why they are so well known: they produce material and ideas that have high transfer utility. Those people who are well known within a sub-discipline generally have a body of Level 2 and Level 3 material. Those who are barely known outside of their national context generally have Level 1/2 profiles (and also have relatively small bodies of published work).

In my opinion, the majority of papers being published in international journals are Level 2/borderline 3 with some minor extension/reworking that has limited utility beyond making sense of a specific phenomenon, or Level 3/borderline 2 with narrow, timid or uninspiring extension/reworking that constrains the paper’s broader appeal. Strong, bold Level 3 papers that have wider utility beyond the paper’s focus are less common, and Level 4 papers that really push the boundaries of thought and praxis are relatively rare. The majority of articles in national level journals tend to be Level 2, and the majority of book chapters in edited collections are Level 1 or 2. It is not uncommon, in my experience, for authors to think the paper that they have written is a category above its real level (which is why they are often so disappointed with editor and referee reviews).

Does this basic taxonomy of impact work in practice?

I’ve not done a detailed empirical study, but can draw on two sources of observations. First, my experience as an editor of two international journals (Progress in Human Geography, Dialogues in Human Geography), and for ten years an editor of another (Social and Cultural Geography), and viewing download rates and citational analysis for papers published in those journals. It is clear from such data that the relationship between level and citation generally holds: those papers that push boundaries and provide new thinking tend to be better cited. There are, of course, some exceptions and there are no doubt some Level 4 papers that are quite lowly cited for various reasons (e.g., their arguments are ahead of their time), but generally the cream rises. Most academics intuitively know this, which is why the most consistent response of referees and editors to submitted papers is to provide feedback that might help shift Level 2/borderline Level 3 papers (which are the most common kind of submission) up to solid Level 3 papers, that is, pieces that provide new ways of thinking and doing and provide fresh perspectives and insights.

Second, by examining my own body of published work. Figure 2 displays the citation rates of all of my published material (books, papers, book chapters) divided into the four levels. There are some temporal effects (such as more recently published work not having had time to be cited) and some outliers (in this case, a textbook and a coffee table book) but the relationship is quite clear, especially when just articles are examined (Figure 3) — the rate of citation increases across levels. (I’ve been fairly brutally honest in categorising my pieces and what’s striking to me personally is proportionally how few Level 3 and 4 pieces I’ve published, which is something for me to work on).

So what does this all mean?

Basically, if you want your work to have impact you should try to write articles that meet Level 3 and 4 criteria — that is, produce novel material that provides new ideas, tools, methods that others can apply in their own studies. Creating such pieces is not easy or straightforward and demands a lot of reading, reflection and thinking, which is why it can be so difficult to get papers accepted into the top journals and the citation distribution curve is so heavily skewed, with a relatively small number of pieces having nearly all the citations (Figure 4 shows the skewing for my papers; my top cited piece has the same number of cites as the 119 pieces with the least number).
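
The comparison in that last parenthesis (one top-cited piece against the combined count of the least-cited pieces) can be checked for any list of citation counts. The following is a minimal sketch in Python; the function name and the example counts are hypothetical, invented purely for illustration, and are not the data behind Figure 4.

```python
def bottom_pieces_matching_top(citations):
    """Count how many of the least-cited pieces are needed, added together,
    to reach the citation count of the single most-cited piece.

    Returns None if the list is empty or if all the other pieces combined
    never reach the top piece's count.
    """
    if not citations:
        return None
    ordered = sorted(citations)      # least cited first
    top = ordered[-1]                # the single most-cited piece
    running = 0
    # Walk up from the least-cited piece, excluding the top piece itself.
    for n, count in enumerate(ordered[:-1], start=1):
        running += count
        if running >= top:
            return n
    return None


# Hypothetical citation counts, one number per published piece.
example = [0, 0, 1, 2, 3, 4, 10, 20]
print(bottom_pieces_matching_top(example))
```

The larger the returned number relative to the total number of pieces, the more heavily the citations are concentrated in the top piece.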

In my experience, papers with zero citations are nearly all Level 1 and 2 pieces. Those should not be the only kinds of paper you are striving to publish if you want some impact from your work.

Rob Kitchin

Slides for "Urban indicators, city benchmarking, and real-time dashboards" talk

The Programmable City team delivered four papers at the Conference of the Association of American Geographers held in Tampa, April 8-12. Here are the slides for Kitchin, R., Lauriault, T. and McArdle, G. (2014). “Urban indicators, city benchmarking, and real-time dashboards: Knowing and governing cities through open and big data” delivered in the session “Thinking the ‘smart city’: power, politics and networked urbanism II” organized by Taylor Shelton and Alan Wiig. The paper is a work in progress and was the first attempt at presenting work that is presently being written up for submission to a journal. No doubt it’ll evolve over time, but the central argument should hold.

Short presentation on the need for critical data studies

At the recent Conference of the Association of American Geographers held in Tampa, April 8-12, I was asked to be a discussant on a set of three sessions concerning geographers’ engagement with big data. The first session was a general intro panel to big data from a geographical perspective, the second panel consisted of a set of a dozen or so short lightning talks (no more than 5 mins each) about each speaker’s on-going research, and the third panel presented some demos of practical approaches researchers are taking to harvesting, curating and sharing big geo-data.

Rather than focus my discussion on the individual comments, papers and demos, I reflected more broadly on the presentations, which I felt had been overly focused on one particular kind of big data, namely social media, with a little crowdsourcing thrown in, and had done so from a standpoint that was overly technical or quite narrowly conceived in conceptual terms. My argument was that we need to help develop, along with other social science disciplines, critical data studies (a term borrowed from Craig Dalton and Jim Thatcher) that fully appreciate and uncover the complex assemblages that produce, circulate, share/sell and utilise data in diverse ways and recognize the politics of data and the diverse work that they do in the world. This also requires a critical examination of the ontology of big data and its varieties, which extend well beyond social media to include various forms of digital and automated surveillance, techno-social systems of work, exhaust from digital devices, sensors, scanners, the internet of things, interaction and transactional data, sousveillance, and various modes of volunteered data. It further requires a thorough consideration of big data’s technical and organisational shortcomings/issues, its associated politics and ethics, and its consequences for the epistemologies, methodologies and practices of academia and various domains of everyday life. I concluded with a call for more synoptic, conceptual and normative analysis of big data, as well as detailed empirical research that examines all aspects of big data assemblages. In other words, I was advocating for a more holistic and critical analysis of big data. Given the speed at which the age of big data is coming into being, such analyses in my view are very much needed to make sense of the changes occurring.

For another reflection on the sessions see Mark Graham’s comments on Zero Geography.

Rob Kitchin